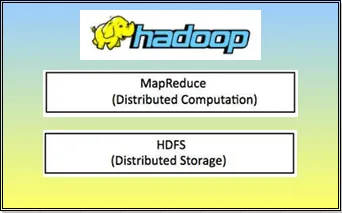

Hadoop is an open-source software framework for storing data and processing that data on clusters of computers. It provides massive storage for any kind of data, enormous processing power, and the ability to handle a virtually unlimited number of concurrent tasks or jobs. It is enormously popular, and there are two fundamental things to understand about it: how it stores data and how it processes data.

Hadoop stores data across a cluster through HDFS (the Hadoop Distributed File System), which is one of Hadoop's core components. Imagine a file that is larger than your PC's storage capacity. Hadoop lets you store files bigger than any single server, or node, could hold by splitting each file into large blocks and spreading those blocks, with copies, across many nodes. It also lets you store a great many such large files. Clusters can be very large: the internet giant Yahoo, for example, uses Hadoop on thousands of nodes.
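To make the storage idea concrete, here is a minimal Python sketch of what happens when HDFS stores a large file: the file is divided into fixed-size blocks, and each block is copied to several different nodes. The 128 MB block size and replication factor of 3 match HDFS's usual defaults (`dfs.blocksize` and `dfs.replication` in Hadoop 2.x and later), but the functions `split_into_blocks` and `place_blocks` are hypothetical, and the round-robin placement is a deliberate simplification: real HDFS lets its NameNode choose nodes with a rack-aware placement policy.

```python
# Illustrative defaults: HDFS's dfs.blocksize is 128 MB and
# dfs.replication is 3 by default in Hadoop 2.x and later.
BLOCK_SIZE_MB = 128
REPLICATION = 3


def split_into_blocks(file_size_mb, block_size_mb=BLOCK_SIZE_MB):
    """Return the sizes (in MB) of the blocks a file would be split into."""
    full_blocks, remainder = divmod(file_size_mb, block_size_mb)
    sizes = [block_size_mb] * full_blocks
    # The last block only holds whatever is left over.
    if remainder:
        sizes.append(remainder)
    return sizes


def place_blocks(num_blocks, nodes, replication=REPLICATION):
    """Assign each block to `replication` distinct nodes, round-robin.

    Hypothetical simplification: real HDFS uses a rack-aware policy.
    """
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    placement = {}
    for block_id in range(num_blocks):
        # Consecutive nodes (wrapping around) hold the copies of this block.
        placement[block_id] = [
            nodes[(block_id + r) % len(nodes)] for r in range(replication)
        ]
    return placement


if __name__ == "__main__":
    blocks = split_into_blocks(1000)  # a 1,000 MB file
    print(blocks)  # [128, 128, 128, 128, 128, 128, 128, 104]
    nodes = [f"node{i}" for i in range(1, 6)]
    for block_id, replicas in place_blocks(len(blocks), nodes).items():
        print(block_id, replicas)
```

Because every block lives on several nodes, no single machine has to hold the whole file, and losing one node does not lose any data.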

The second key characteristic of Hadoop is the way it processes data, using MapReduce. Traditional approaches bring the data to a central processing system, which takes a long time for huge data sets. Hadoop does the opposite: it moves the processing code to the nodes where the data already lives. In the map step, each node processes its own local portion of the data in parallel; in the reduce step, those partial results are combined into the final answer, in far less time.
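The map and reduce steps can be illustrated with a small Python simulation of word count, the customary first MapReduce example (it is not taken from this article). Each string in `splits` stands in for a block stored on a different node; a mapper runs on each split concurrently, a shuffle groups the emitted pairs by word, and a reducer sums each group. The function names are hypothetical, and everything runs inside one Python process: real Hadoop jobs are usually written against Hadoop's Java `Mapper` and `Reducer` classes and run on the nodes that hold the data.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def map_words(text_split):
    """Map step: emit a (word, 1) pair for every word in one node's split."""
    return [(word.lower(), 1) for word in text_split.split()]


def shuffle(mapped_outputs):
    """Group all emitted values by key, as Hadoop does between map and reduce."""
    grouped = defaultdict(list)
    for pairs in mapped_outputs:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_counts(key, values):
    """Reduce step: combine the partial results for one key."""
    return key, sum(values)


def word_count(splits):
    # One mapper per split, run concurrently like Hadoop's map tasks.
    with ThreadPoolExecutor() as pool:
        mapped = list(pool.map(map_words, splits))
    grouped = shuffle(mapped)
    return dict(reduce_counts(k, v) for k, v in grouped.items())


if __name__ == "__main__":
    splits = [
        "Hadoop stores data in HDFS",
        "Hadoop processes data with MapReduce",
    ]
    print(word_count(splits))  # 'hadoop' and 'data' are each counted twice
```

The design point is that each mapper only ever sees its own split, so the map work scales out with the number of nodes; only the much smaller intermediate results have to travel to the reducers.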